Paper

55 published links

Recursive Multi-Agent Systems

Does String Theory Actually Describe the World? AI May Be Able to Tell

Using machine learning, string theorists are finally showing how microscopic configurations of extra dimensions translate into sets of elementary particles—though not yet those of our universe.

The Neural Shortcut to Language - Neuroscience News

Researchers use molecular barcoding to discover that Alston’s singing mice evolved complex vocalizations through targeted tripling of neural projections.

Study estimates we are speaking 338 fewer words per day

Researchers say the decline was steepest among people under 25, possibly linked to technology use

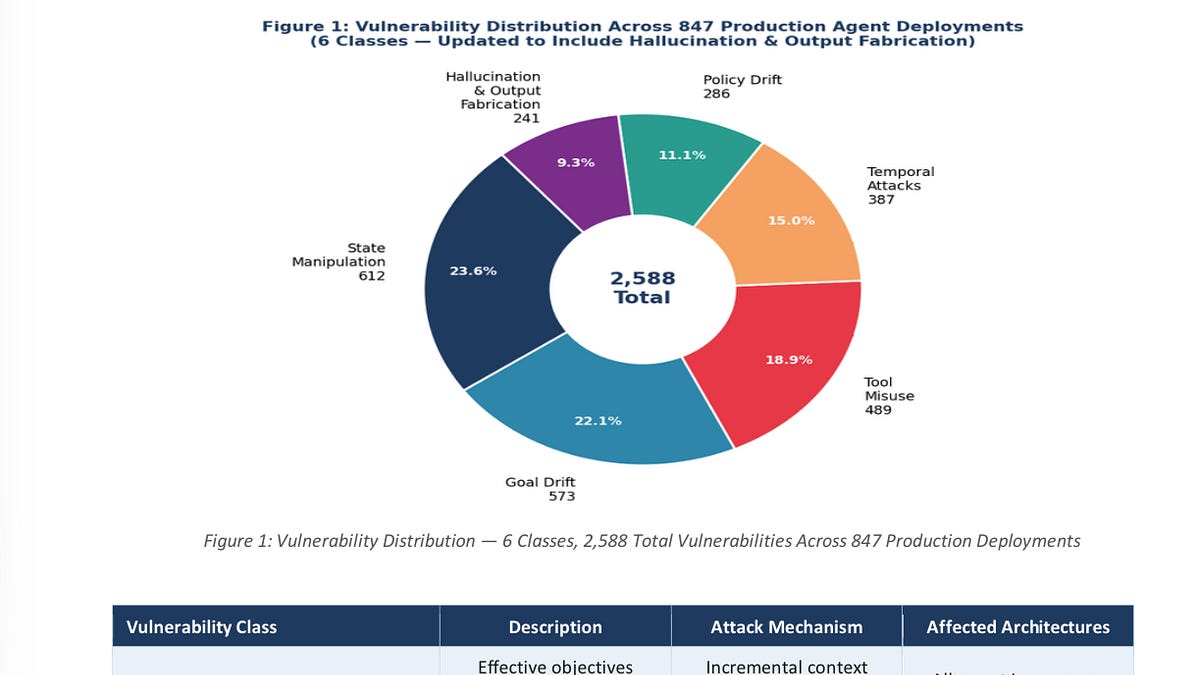

Breaking: Autonomous Agents Are Chaos

Sorry to use a technical term in the title

Scientists integrate an electronic system with living brain cells

The biocomputing network could be applied to treating neurological diseases and serve as a basis for lowering the energy costs of artificial intelligence

Adversarial AI reveals mechanisms and treatments for disorders of consciousness | Nature Neuroscience

Paper published on 24.04.2026: https://www.nature.com/articles/s41593-026-02220-4.epdf

@92453549953121 I think this may interest you. I made the infographic and the summary with ChatGPT, but without feeding it the PDF, which I have not managed to get yet.

🧠 Adversarial AI helps reveal the mechanisms of disorders of consciousness

A study published in Nature Neuroscience in 2026 presented an innovative approach to investigating disorders of consc…

From Minecraft to science: 10-year-old from Maringá becomes the youngest Brazilian to publish a scientific paper

Passionate about reading and a Minecraft fan, she turned her interest in the game's rocks and minerals into real research

Lyra 2.0: Explorable Generative 3D Worlds

Lyra 2.0: Explorable Generative 3D Worlds - camera-controlled walkthrough videos lifted to 3D via feed-forward reconstruction. We address spatial forgetting and temporal drifting for long-horizon, 3D-consistent generation.

Stanford AI Index Report 2026 - AI, the technology that's advancing faster than we can understand.

With massive adoption, billion-dollar economic impact, and poorly understood risks, Artificial Intelligence heralds a new era of asymmetry between technical capability and human control.

Why AI Can Never Escape Turing's 1936 Proof

Artificial intelligence is bound by Turing's 1936 proof.

Functional Emotions in Language Models: Representation Without Consciousness

Language models do not feel, but they organize behavior as if they did. This claim is not metaphorical.

A brain-inspired chip could make some AI tasks up to 2,000 times more energy-efficient

A new type of computer chip that uses the physics of materials to process information could make some artificial intelligence (AI) systems much more efficient in terms of…

Applying Post-Quantum Cryptography Algorithms to a DLT-Based CBDC Infrastructure: Comparative and Feasibility Analysis

This article presents an innovative project for a Central Bank Digital Currency (CBDC) infrastructure. Focusing on security and reliability, the proposed architecture: (1) employs post-quantum cryptography (PQC) algorithms for long-term security, even against attackers with access to cryptographically-relevant quantum computers; (2) can be integrated with a Trusted Execution Environment (TEE) to safeguard the confidentiality of transaction contents as they are processed by third parties; and (3) uses Distributed Ledger Technology (DLT) to promote a high level of transparency and tamper resistance for all transactions registered in the system. Besides providing a theoretical discussion on the benefits of this architecture, we experimentally evaluate its components. Namely, as PQC algorithms, we consider three signature schemes being standardized by the National Institute of Standards and Technology (NIST): CRYSTALS-Dilithium, Falcon, and SPHINCS+. Those algorithms are integrated into the Hyperledger Besu (DLT) and executed both inside and outside an Intel SGX TEE environment. According to our results, CRYSTALS-Dilithium-2 combined with classical secp256k1 signatures leads to the shortest execution times when signing blocks in the DLT, reaching 1.68ms without the TEE, and 2.09ms with TEE. The same combination also displays the best results for signature verifications, achieving 0.5ms without a TEE and 1.98ms with a TEE. We also describe the main aspects of the evaluation methodology and the next steps in validating the proposed infrastructure. The conclusion drawn from our experiments is that the combination of PQC and TEE promises highly secure and effective DLT-based CBDC scenarios, ready to face the challenges of the digital financial future and potential quantum threats.

The expected emergence of cryptographically relevant quantum computers (CRQCs) will represent a sing…

The expected emergence of cryptographically relevant quantum computers (CRQCs) will represent a singular discontinuity in the history of digital security, with wide-ranging impacts. This whitepaper seeks to elucidate specific implications that the capabilities of developing quantum architectures have on blockchain vulnerabilities and potential mitigation strategies. First, we provide new resource estimates for breaking the 256-bit Elliptic Curve Discrete Logarithm Problem over the secp256k1

Meta-Harness: End-to-End Optimization of Model Harnesses

Meta-Harness automatically optimizes model harnesses — the code determining what to store, retrieve, and present to an LLM — surpassing hand-designed systems on text classification, math reasoning, and agentic coding.

Stanford study outlines dangers of asking AI chatbots for personal advice

While there’s been plenty of debate about AI sycophancy, a new study by Stanford computer scientists attempts to measure how harmful that tendency might be.

Understanding the Mosca Digital (Digital Fly)

Mosca Digital, the phenomenon that exposes online disinformation and teaches how to recognize its tactics and impacts, strengthening critical reading.

Market for sexual deepfakes grows on Civitai - MIT Technology Review

Research reveals how Civitai enables the buying and selling of AI tools used to create sexual deepfakes of real women.

Supervising AIs at work causes "brain fry," study finds | CNN Brasil

Boston Consulting Group research identifies mental fatigue in workers who supervise multiple artificial intelligence agents

UNDERSTAND HOW SCIENTISTS CREATED THE FIRST LIVING VIRTUAL FLY

By Brasil Paralelo

This fly is ALIVE in the Matrix...

By Matthew Berman

Physics Edit: New Image Editor Model Is Insanely Better than NANO BANANA

By WHA Coder

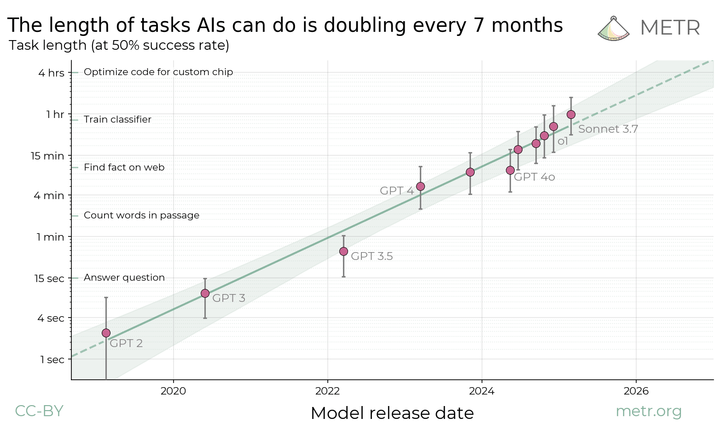

Measuring AI Ability to Complete Long Tasks

https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/

Guide Labs launches a new kind of interpretable LLM

The company open sourced an 8-billion-parameter LLM, Steerling-8B, trained with a new architecture designed to make its actions easily interpretable.

Not in the US or Europe: group of Brazilian researchers develops molecules that kill brain cancer cells

USP researchers create new molecules capable of eliminating brain tumor cells

Does AI already have human-level intelligence? The evidence is clear.

The vision of human-level machine intelligence laid out by Alan Turing in the 1950s is now a reality. Eyes unclouded by dread or hype will help us to prepare for what comes next.

Negative perceptions of outsourcing to artificial intelligence

As artificial intelligence (AI) tools become increasingly integrated into daily life, people are beginning to outsource not only professional tasks bu…

What more can we expect from the world when even a serious journal like Nature publishes a piece as rosy as this one?

Probabilistic approximation does not result in AGI (Artificial General… | Cassio Pantaleoni

Hallucinations with AI: distributed delusions and psychosis induced by intelligent systems

When a generative AI presents incorrect information, it is often said to be "hallucinating," that is, producing false content that can be taken as true. However, a study…

Chinese AI Qwen3 is trained to 'be positive' and avoid criticism in answers about the country, research finds

Media researchers found that Qwen3, a rising Chinese AI, receives specific training to give positive answers on sensitive topics

Photo of Rodrigo Palhano

Permissionless does not mean decentralized. | Hanna Halaburda

Permissionless does not mean decentralized.

In DeFi, open and transparent transaction execution doesn’t prevent concentration — it creates it.

When transaction ordering affects payoffs, execution…

MIT Unmasks Moltbook: "Posts Made by Humans"

In a paper released this Friday, the 6th, experts in the field say AI agents are not as independent as they seem

This paper is great:

https://arxiv.org/pdf/2602.02961

“The paper presents Pinterest GEO, an industrial-scale framework that proposes the transition from SEO to Generative Engine Optimization (GEO) in the face of the rise of LLM-based search engines, in which answers are synthesized and sources are selected by semantic authority; to solve the problem of the low visibility of visual content in this new paradigm, the authors combine Vision-Language Models trained to generate

The first signs of burnout are coming from the people who embrace AI the most.

Because employees could do more, work began bleeding into lunch breaks and late evenings. The employees' to-do lists expanded to fill every hour that AI freed up, and then kept going.

ARC-AGI-3 Preview

The first interactive reasoning benchmark for AI agents.

Vibe Coding Is Killing Open Source Software, Researchers Argue

‘If the maintainers of small projects give up, who will produce the next Linux?’

IAR FRAMEWORK: BALANCING INNOVATION AND RISK CONTROL IN THE AGE OF ARTIFICIAL INTELLIGENCE

The recent security incident involving the autonomous agent "Clawbot" exposed critical vulnerabilities in the accelerated adoption of technologies without adequate risk controls. This article proposes the…

New DeepSeek research - The future has arrived!

By Two Minute Papers

Why AI Will Hit a Wall (MIT Proved It)

By Parthknowsai

Yesye

[2601.10825] Reasoning Models Generate Societies of Thought

Large language models have achieved remarkable capabilities across domains, yet mechanisms underlying sophisticated reasoning remain elusive. Recent reasoning models outperform comparable instruction-tuned models on complex cognitive tasks, attributed to extended computation through longer chains of thought. Here we show that enhanced reasoning emerges not from extended computation alone, but from simulating multi-agent-like interactions -- a society of thought -- which enables diversification and debate among internal cognitive perspectives characterized by distinct personality traits and domain expertise. Through quantitative analysis and mechanistic interpretability methods applied to reasoning traces, we find that reasoning models like DeepSeek-R1 and QwQ-32B exhibit much greater perspective diversity than instruction-tuned models, activating broader conflict between heterogeneous personality- and expertise-related features during reasoning. This multi-agent structure manifests in conversational behaviors, including question-answering, perspective shifts, and the reconciliation of conflicting views, and in socio-emotional roles that characterize sharp back-and-forth conversations, together accounting for the accuracy advantage in reasoning tasks. Controlled reinforcement learning experiments reveal that base models increase conversational behaviors when rewarded solely for reasoning accuracy, and fine-tuning models with conversational scaffolding accelerates reasoning improvement over base models. These findings indicate that the social organization of thought enables effective exploration of solution spaces. We suggest that reasoning models establish a computational parallel to collective intelligence in human groups, where diversity enables superior problem-solving when systematically structured, which suggests new opportunities for agent organization to harness the wisdom of crowds.

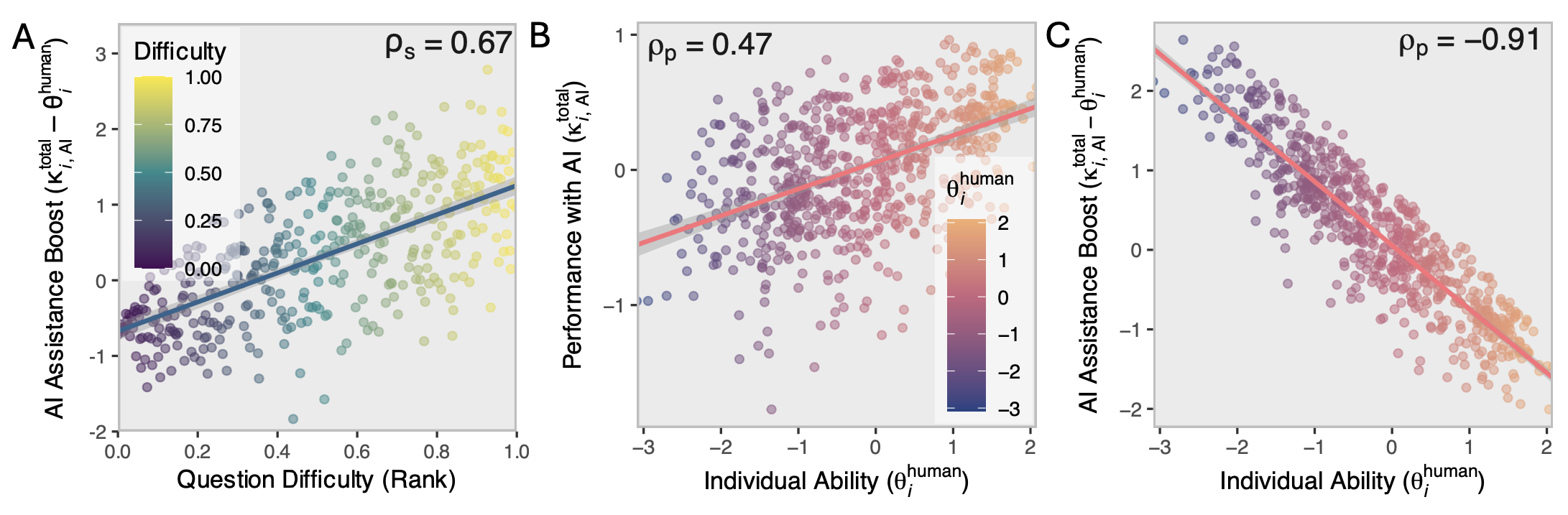

Quantifying Human-AI Synergy

PsyArXiv

[2510.26493] Context Engineering 2.0: The Context of Context Engineering

Karl Marx once wrote that "the human essence is the ensemble of social relations", suggesting that individuals are not isolated entities but are fundamentally shaped by their interactions with other entities, within which contexts play a constitutive and essential role. With the advent of computers and artificial intelligence, these contexts are no longer limited to purely human-human interactions: human-machine interactions are included as well. Then a central question emerges: How can machines better understand our situations and purposes? To address this challenge, researchers have recently introduced the concept of context engineering. Although it is often regarded as a recent innovation of the agent era, we argue that related practices can be traced back more than twenty years. Since the early 1990s, the field has evolved through distinct historical phases, each shaped by the intelligence level of machines: from early human-computer interaction frameworks built around primitive computers, to today's human-agent interaction paradigms driven by intelligent agents, and potentially to human-level or superhuman intelligence in the future. In this paper, we situate context engineering, provide a systematic definition, outline its historical and conceptual landscape, and examine key design considerations for practice. By addressing these questions, we aim to offer a conceptual foundation for context engineering and sketch its promising future. This paper is a stepping stone for a broader community effort toward systematic context engineering in AI systems.

LLM-in-Sandbox Elicits General Agentic Intelligence

We introduce LLM-in-Sandbox, enabling LLMs to explore within a code sandbox (i.e., a virtual computer), to elicit general intelligence in non-code domains. We first demonstrate that strong LLMs, without additional training, exhibit generalization capabilities to leverage the code sandbox for non-code tasks. For example, LLMs spontaneously access external resources to acquire new knowledge, leverage the file system to handle long contexts, and execute scripts to satisfy formatting requirements. We further show that these agentic capabilities can be enhanced through LLM-in-Sandbox Reinforcement Learning (LLM-in-Sandbox-RL), which uses only non-agentic data to train models for sandbox exploration. Experiments demonstrate that LLM-in-Sandbox, in both training-free and post-trained settings, achieves robust generalization spanning mathematics, physics, chemistry, biomedicine, long-context understanding, and instruction following. Finally, we analyze LLM-in-Sandbox's efficiency from computational and system perspectives, and open-source it as a Python package to facilitate real-world deployment.

Call Me A Jerk: Persuading AI to Comply with Objectionable Requests - Wharton Generative AI Labs

Context Graphs: AI's Next Big Idea

By The AI Daily Brief: Artificial Intelligence News

Celso Furtado and Conceição Tavares: The historical debate on the construction...

By Instituto de Economia da Unicamp

[2601.00306] The Generative AI Paradox: GenAI and the Erosion of Trust, the Corrosion of Information Verification, and the Demise of Truth

Generative AI (GenAI) now produces text, images, audio, and video that can be perceptually convincing at scale and at negligible marginal cost. While public debate often frames the associated harms as "deepfakes" or incremental extensions of misinformation and fraud, this view misses a broader socio-technical shift: GenAI enables synthetic realities; coherent, interactive, and potentially personalized information environments in which content, identity, and social interaction are jointly manufactured and mutually reinforcing. We argue that the most consequential risk is not merely the production of isolated synthetic artifacts, but the progressive erosion of shared epistemic ground and institutional verification practices as synthetic content, synthetic identity, and synthetic interaction become easy to generate and hard to audit. This paper (i) formalizes synthetic reality as a layered stack (content, identity, interaction, institutions), (ii) expands a taxonomy of GenAI harms spanning personal, economic, informational, and socio-technical risks, (iii) articulates the qualitative shifts introduced by GenAI (cost collapse, throughput, customization, micro-segmentation, provenance gaps, and trust erosion), and (iv) synthesizes recent risk realizations (2023-2025) into a compact case bank illustrating how these mechanisms manifest in fraud, elections, harassment, documentation, and supply-chain compromise. We then propose a mitigation stack that treats provenance infrastructure, platform governance, institutional workflow redesign, and public resilience as complementary rather than substitutable, and outline a research agenda focused on measuring epistemic security. We conclude with the Generative AI Paradox: as synthetic media becomes ubiquitous, societies may rationally discount digital evidence altogether.