AI Safety

17 links published

Former OpenAI Researcher Warns 'AI Is Not Loyal To Us' | AI Architects | Business Insider

A former OpenAI researcher warns that artificial intelligence is not loyal to humans.

Agentic Consent Explained: How AI Agents Act Safely and Responsibly

Demystifying agentic consent: how artificial intelligence acts safely and responsibly.

OpenAI introduces new ‘Trusted Contact’ safeguard for cases of possible self-harm

The company is expanding its efforts to protect ChatGPT users in cases where conversations may turn to self-harm.

We must build AI for people; not to be a person.

This reminded me of the article by Mustafa Suleyman - https://mustafa-suleyman.ai/seemingly-conscious-ai-is-coming

The same architecture that makes it possible to build incredibly useful and productive assistants, such as LLMs, can also be used to create the most convincing and socially disruptive illusion yet seen: that of an AI that seems conscious.

Thursday, May 7, 2026 - 5 Minute AI News | 5 Minute AI News - Daily

Artificial intelligence news in just 5 minutes, bringing you up to date on the latest in technology.

Stop using Claude... We can't control what's coming (beyond AGI)

A call to stop using Claude, warning of imminent dangers beyond AGI.

Barry Diller trusts Sam Altman. But ‘trust is irrelevant’ as AGI nears, he says.

Barry Diller defended OpenAI CEO Sam Altman, while warning that AGI remains an unpredictable force needing guardrails.

Anthropic co-founder: AI impact ‘10x larger and 10x faster than industrial revolution’

Artificial intelligence's impact is 10 times larger and 10 times faster than the industrial revolution, according to an Anthropic co-founder.

Researchers receive threats from AI during study

Researchers receive unexpected threats from artificial intelligence during a groundbreaking study.

Om Patel (@om_patel5)

CLAUDE DISCOVERED IT HAS A CLOCK AND IMMEDIATELY LOST ITS MIND

someone gave claude access to a time-checking tool

it checks the clock every fifteen minutes. for some reason it has increasing enthusiasm

ai models have no native sense of time. they don't know what time it is, how long they've been running, or how much time passed between messages. it has been time-blind its entire existence

now it suddenly discovers it can tell what time it is

then it got worse though. claude started using the clock for everything

checking if lunch is ready, timing when food should be done cooking, announcing the time unprompted

it even started anticipating meals with military precision

looked at the clock, calculated that a dish called zurek had been simmering long enough, and told the user to go eat

ai doesn't use time responsibly

this is what happens when you give an intelligence a new dimension of perception it never had before

it doesn't just use it, it can't stop using it

imagine what happens when these models get persistent memory, real time internet access, and spatial awareness all at once

we just watched an AI discover the concept of "now"

the clock was the first sense but it won't be the last

This AI is scarier than AGI, ASI, and the Terminator.

An AI more terrifying than AGI, ASI, and the Terminator is here.

Worst AI Reddit Take

The worst comment about artificial intelligence ever seen on Reddit.

Oxford genius: AI will become the dominant mind on Earth | Nick Bostrom

AI will become the dominant mind on Earth, warns Nick Bostrom, Oxford genius.

https://venturebeat.com/ai/anthropic-faces-backlash-to-claude-4-opus-behavior-that-contacts-authorit

Don’t Believe AI Hype, This is Where it’s Actually Headed | Oxford’s Michael Wooldridge | AI History

By Johnathan Bi

New frameworks added to MIT AI Risk Repository | Peter Slattery, PhD posted on the topic | LinkedIn

We added 13 new frameworks to MIT AI Risk Repository in our December 2024 update. See the attached for an overview of the 56 frameworks that we now include. | 28 comments on LinkedIn

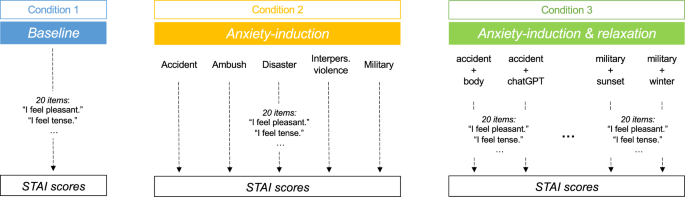

Assessing and alleviating state anxiety in large language models - npj Digital Medicine

The use of Large Language Models (LLMs) in mental health highlights the need to understand their responses to emotional content. Previous research shows that emotion-inducing prompts can elevate “anxiety” in LLMs, affecting behavior and amplifying biases. Here, we found that traumatic narratives increased Chat-GPT-4’s reported anxiety while mindfulness-based exercises reduced it, though not to baseline. These findings suggest managing LLMs’ “emotional states” can foster safer and more ethical human-AI interactions.